Microsoft Forces Rival AI Models to Compete Inside Copilot: GPT Drafts, Claude Validates, Enterprise Wins


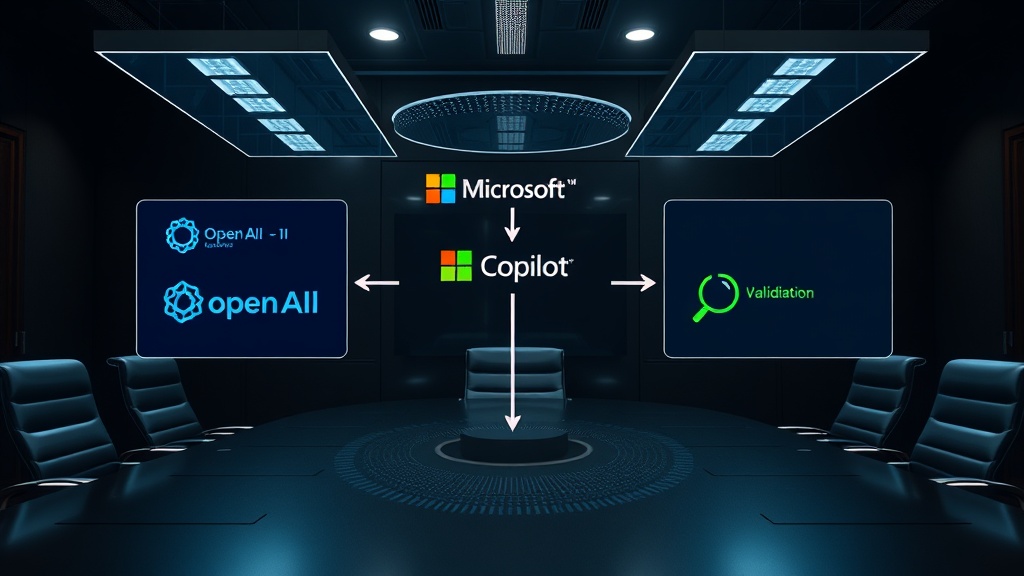

On March 30, 2026, Microsoft 365 Copilot became the first enterprise AI platform to weaponize competition between rival AI models for quality improvement. The feature, called Critique, pairs OpenAI's GPT, which drafts research responses, with Anthropic's Claude, which audits those responses for accuracy, completeness, and citation integrity before any user sees the output. This is not experimental research. It is production enterprise software deployed across a 450-million-user customer base, with a 13.8 percent accuracy improvement over the best standalone competitor, Perplexity Deep Research.

Critique represents a fundamental architectural shift in how organizations deploy AI for knowledge work. Instead of betting on a single model vendor and accepting its failure modes, Microsoft has built an adjudication layer where competing AI systems cross-examine each other's outputs. The result is a structural improvement in reliability that directly addresses the primary enterprise adoption barrier: hallucination risk.

The Multi-Model Orchestration Architecture

The technical architecture behind Critique separates generation and validation into distinct computational lanes. GPT handles the exploratory phase—scanning sources, synthesizing information, generating structured research responses. Claude then operates as an adversarial reviewer, checking factual claims against source material, identifying overconfident assertions, validating citation chains, and flagging structural weaknesses in the synthesis.
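To make the reviewer's checklist concrete, here is a minimal rule-based sketch of the checks described above: uncited claims, citations pointing at sources that were never retrieved, numbers that do not appear in the cited source, and overconfident wording. It is a toy stand-in built on hypothetical `Claim`, `Finding`, and `critique()` names. The article does not describe how Claude performs these checks, and a model-based reviewer would reason over meaning rather than string matches.

```python
import re
from dataclasses import dataclass, field

# Hypothetical markers of overconfident phrasing (illustrative only).
OVERCONFIDENT_TERMS = {"always", "never", "guaranteed", "proves", "certainly"}
NUMBER = re.compile(r"\d+(?:\.\d+)?")


@dataclass
class Claim:
    text: str
    citations: list[str] = field(default_factory=list)  # IDs of cited sources


@dataclass
class Finding:
    claim_index: int
    issue: str


def critique(claims: list[Claim], sources: dict[str, str]) -> list[Finding]:
    """Toy reviewer pass over a drafted answer, one claim at a time."""
    findings: list[Finding] = []
    for i, claim in enumerate(claims):
        if not claim.citations:
            findings.append(Finding(i, "no citation"))
        # Citation chain: every cited ID must refer to a retrieved source.
        for cid in claim.citations:
            if cid not in sources:
                findings.append(Finding(i, f"unknown source {cid}"))
        # Crude grounding check: numbers in the claim must appear in cited text.
        cited_text = " ".join(sources.get(cid, "") for cid in claim.citations)
        cited_numbers = set(NUMBER.findall(cited_text))
        for n in NUMBER.findall(claim.text):
            if n not in cited_numbers:
                findings.append(Finding(i, f"number {n} not found in cited sources"))
        # Overconfidence: absolute words the sources may not support.
        words = {w.strip(".,;:!?").lower() for w in claim.text.split()}
        if words & OVERCONFIDENT_TERMS:
            findings.append(Finding(i, "overconfident wording"))
    return findings


if __name__ == "__main__":
    sources = {"S1": "Quarterly revenue rose 4 percent."}
    draft = [
        Claim("Revenue rose 4 percent.", ["S1"]),
        Claim("Costs fell 9 percent.", ["S1"]),
        Claim("Growth will always continue.", ["S2"]),
        Claim("Margins improved."),
    ]
    for f in critique(draft, sources):
        print(f.claim_index, f.issue)
```

On this draft the sketch passes claim 0, flags claim 1 for a number its source does not contain, flags claim 2 for a dangling citation and the word "always", and flags claim 3 for having no citation at all.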

This pattern mirrors peer review in academic publishing, except that it runs in seconds rather than weeks. The performance data is concrete: Researcher with Critique scored 7.0 points higher than Perplexity Deep Research running Claude Opus 4.6 on DRACO, a deep research accuracy benchmark published in February 2026 that spans 100 complex tasks across 10 domains.

The strategic implication extends beyond research workflows. Microsoft has effectively turned its former AI dependency—complete reliance on OpenAI—into competitive optionality. By building internal orchestration capabilities that incorporate Anthropic's Claude alongside OpenAI's GPT, Microsoft has created architectural resilience that protects its enterprise platform from single-vendor lock-in.

Model Council: Comparative Decision-Making at Scale

Critique is not Microsoft's only multi-model innovation. The company introduced Model Council, a capability that runs multiple AI models side by side on the same task and presents their outputs for comparison. This feature allows enterprise users to select the model best suited for specific task types—coding, analysis, creative writing—rather than accepting a one-size-fits-all default.
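A side-by-side comparison of this kind can be sketched as fanning one task out to several models in parallel and collecting the outputs by name. The sketch assumes each model is a plain Python callable; `run_council()`, `pick()`, and the model names are hypothetical and stand in for whatever vendor clients an organization actually uses.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

ModelFn = Callable[[str], str]  # hypothetical: prompt in, completion out


def run_council(task: str, models: dict[str, ModelFn]) -> dict[str, str]:
    """Send the same task to every model concurrently; return outputs by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, task) for name, fn in models.items()}
        return {name: fut.result() for name, fut in futures.items()}


def pick(task_type: str, outputs: dict[str, str],
         preferences: dict[str, str], default: str) -> str:
    """Return the output of the model a user prefers for this task type."""
    return outputs[preferences.get(task_type, default)]


if __name__ == "__main__":
    models = {
        "model_a": lambda t: f"[A] {t}",
        "model_b": lambda t: f"[B] {t}",
    }
    outputs = run_council("Summarize the Q3 variance report", models)
    for name, text in outputs.items():
        print(f"{name}: {text}")  # side-by-side view
    prefs = {"coding": "model_a", "analysis": "model_b"}
    print(pick("analysis", outputs, prefs, default="model_a"))
```

Keeping the per-task preference table outside the models is one way to let users record "best model for this kind of work" without changing any model integration.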

The competitive dynamics inside Microsoft's own platform are striking. Anthropic's Claude, which Microsoft invested one billion dollars to integrate into Copilot when the OpenAI relationship showed strain, now serves as a quality control mechanism for OpenAI's generated content. This represents a deliberate strategy: Microsoft ensures that its two most important AI partners compete within its ecosystem, preventing either from gaining negotiating leverage through exclusivity.

Multi-model orchestration is not a technical curiosity—it is a risk management framework for enterprises that cannot afford single-model failure modes. Organizations should treat AI model diversity the way they treat supplier diversification in traditional procurement.

Copilot Cowork: Agentic AI Enters the Enterprise Workplace

The second major announcement expanded access to Copilot Cowork, an agentic AI tool powered by Anthropic's Claude. Unlike traditional chat-based AI that waits for user prompts, Cowork operates autonomously across Microsoft 365 applications—planning budget reviews, managing calendar workflows, and executing multi-step tasks without continuous human intervention.

Cowork represents a fundamentally different usage paradigm from Copilot's existing chat interface. Users assign goals rather than issuing commands. The agent plans execution sequences, performs tasks across interconnected applications, and delivers results. Early adoption velocity has already exceeded that of Claude Code, Anthropic's developer-focused agent product, according to the company's Chief Business Officer.
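The goal-to-plan-to-execution pattern can be illustrated with a small loop, assuming a hypothetical tool registry and a stand-in planner. Nothing here reflects Cowork's actual interfaces: `TOOLS`, `plan()`, and `execute()` are illustrative names, and a real agent would ask a model to produce the plan and would call application APIs rather than lambdas.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    tool: str
    arg: str  # "{prev}" is replaced with the previous step's result


# Hypothetical tools; a real agent would call mail, calendar, or spreadsheet APIs.
TOOLS: dict[str, Callable[[str], str]] = {
    "find_spreadsheet": lambda query: f"budget.xlsx ({query})",
    "summarize": lambda doc: f"summary of {doc}",
    "schedule_meeting": lambda topic: f"meeting booked: {topic}",
}


def plan(goal: str) -> list[Step]:
    """Stand-in planner; a real agent would ask a model to decompose the goal."""
    return [
        Step("find_spreadsheet", goal),
        Step("summarize", "{prev}"),
        Step("schedule_meeting", f"review of {goal}"),
    ]


def execute(goal: str) -> list[str]:
    """Run each planned step in order, chaining results where requested."""
    results: list[str] = []
    for step in plan(goal):
        arg = step.arg.replace("{prev}", results[-1]) if results else step.arg
        if step.tool not in TOOLS:
            raise KeyError(f"planner requested unknown tool {step.tool!r}")
        results.append(TOOLS[step.tool](arg))
    return results


if __name__ == "__main__":
    for line in execute("Q3 budget"):
        print(line)
```

The user supplies only the goal string; the plan, the tool calls, and the chaining of intermediate results happen without further prompts, which is the distinction from chat-style use.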

The Microsoft 365 ecosystem provides Cowork with a structural advantage: over 450 million commercial seats integrated into connected applications—Outlook for communication context, Excel for data analysis, Teams for meeting intelligence, SharePoint for document management. An autonomous agent embedded at this integration depth has access to a breadth of enterprise data and workflows that standalone AI agents cannot match without significant partnership investment.

Below is the comparative assessment of where each major AI agent platform operates relative to enterprise integration depth.

| Platform | Core Model | Integration Scope | Autonomy Level | Primary Use Case |
|---|---|---|---|---|
| Copilot Cowork | Anthropic Claude | Full Microsoft 365 suite (450M seats) | High (goal-based task delegation) | Multi-step business workflows |
| Claude Code | Anthropic Claude | Code repositories, IDE environment | High (multi-step, multi-file editing) | Developer code generation |
| OpenAI Codex | OpenAI GPT-5.3 | IDE integration, API access | Medium (task execution with review) | Coding assistance |
| Copilot Critique | GPT + Claude | Research workflows, M365 documents | Low (draft-review cycle) | Accuracy-critical research |
| Gemini Workspace | Google Gemini | Google Workspace apps | Low-Medium (app-embedded prompts) | Workspace productivity |
| Cursor 3 | OpenAI + Anthropic | Independent IDE environment | High (agent-first coding) | Developer workflows |

Microsoft's strategic advantage is integration depth, not model quality. The platform advantage of 450 million pre-connected commercial users, unified data access across applications, and existing enterprise procurement relationships creates a moat that standalone AI agents struggle to overcome regardless of individual model capability.

The Hallucination War and Enterprise Trust

AI hallucination remains the single largest barrier to enterprise adoption. Gartner research consistently identifies accuracy concerns as the top reason companies delay AI deployment beyond pilot programs. The Critique architecture addresses this problem through a structural mechanism rather than a model improvement.

Separating generation and validation across different models, trained on different datasets with different optimization objectives, significantly reduces the probability that both models make the same hallucination, provided their errors are not strongly correlated. This is the enterprise equivalent of dual-key nuclear launch systems: critical actions require independent verification from distinct computational systems operating on different architectures.
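The argument above depends on how correlated the two models' errors are. Treating each model's error on a given claim as a Bernoulli event, and making the simplifying assumption that a hallucination slips through only when the reviewer errs on the same claim, the joint error rate follows from the two error rates and their correlation. The 10 percent error rates below are placeholders, not measured figures for GPT or Claude.

```python
import math


def joint_error_rate(p_gen: float, p_rev: float, rho: float = 0.0) -> float:
    """P(generator and reviewer both err on the same claim).

    Each model's error is a Bernoulli variable and rho is their correlation:
    P(both) = p_gen * p_rev + rho * sd_gen * sd_rev.
    """
    cov = rho * math.sqrt(p_gen * (1 - p_gen) * p_rev * (1 - p_rev))
    p_both = p_gen * p_rev + cov
    # Any valid joint probability must lie within the Frechet bounds.
    lower, upper = max(0.0, p_gen + p_rev - 1), min(p_gen, p_rev)
    if not lower - 1e-12 <= p_both <= upper + 1e-12:
        raise ValueError("rho is infeasible for these error rates")
    return p_both


if __name__ == "__main__":
    # Placeholder 10 percent error rates for both models.
    for rho in (0.0, 0.3, 1.0):
        print(f"rho={rho}: {joint_error_rate(0.10, 0.10, rho):.3f}")
```

With independent errors the joint rate falls from 10 percent to 1 percent; at a correlation of 0.3 it is 3.7 percent; with perfectly correlated errors the reviewer adds nothing. Model diversity pays off only to the extent that it lowers that correlation.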

The 13.8 percent accuracy improvement that Critique achieves is not marginal. In deep research contexts with thousands of citation points, this translates to hundreds of additional verified claims per research engagement. For enterprise use cases—legal research, compliance analysis, financial due diligence—each uncaught hallucination carries measurable financial and regulatory risk.

The following diagram maps the multi-model adjudication workflow that Microsoft has implemented inside Copilot:

```mermaid
graph TD
    A["User Research Query"] --> B["GPT Draft Generation"]
    B --> C["Source Material Compilation"]
    C --> D["Claude Critique Review"]
    D --> E{"Quality Threshold Met?"}
    E -->|"No"| F["Revision Request to GPT"]
    F --> B
    E -->|"Yes"| G["Model Council Comparison"]
    G --> H["Final Response Delivery"]
    C --> G
    D --> G
    G -->|"Alternative Model"| I["Claude Draft Generation"]
    I --> G
    H --> J["13.8% Accuracy Improvement Over Best Single Model"]
```
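The core of the diagram, nodes B through F, is a draft-critique-revise loop. A minimal sketch, assuming hypothetical `draft_fn` and `critique_fn` callables (the first returns a draft given the query and prior feedback, the second returns a score and a list of issues) and leaving out the Model Council branch:

```python
from typing import Callable

DraftFn = Callable[[str, list[str]], str]              # (query, feedback) -> draft
CritiqueFn = Callable[[str], tuple[float, list[str]]]  # draft -> (score, issues)


def draft_critique_loop(query: str, draft_fn: DraftFn, critique_fn: CritiqueFn,
                        threshold: float = 0.9, max_rounds: int = 3):
    """Draft, review, and revise until the review score clears the threshold.

    Returns (draft, score, rounds_used, passed). max_rounds is an added
    assumption that bounds latency and cost; the diagram shows no limit.
    """
    feedback: list[str] = []
    draft, score = "", 0.0
    for round_no in range(1, max_rounds + 1):
        draft = draft_fn(query, feedback)    # GPT Draft Generation
        score, issues = critique_fn(draft)   # Claude Critique Review
        if score >= threshold:               # Quality Threshold Met?
            return draft, score, round_no, True
        feedback = issues                    # Revision Request to GPT
    return draft, score, max_rounds, False


if __name__ == "__main__":
    def fake_draft(query: str, feedback: list[str]) -> str:
        return query + "".join(f" [fixed: {issue}]" for issue in feedback)

    def fake_critique(draft: str) -> tuple[float, list[str]]:
        return (0.95, []) if "[fixed:" in draft else (0.6, ["missing citation"])

    print(draft_critique_loop("Q3 market sizing", fake_draft, fake_critique))
```

Returning a `passed` flag rather than silently shipping the last draft lets the caller decide what to do when the reviewer never approves, for example routing the query to a human.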

This architecture represents a significant departure from the conventional single-model approach. Every major AI platform—OpenAI, Anthropic, Google—operates under an architecture where one model handles all reasoning for a given query. Microsoft has introduced adversarial validation as a production feature, which fundamentally changes the quality ceiling for generated outputs.

The Competitive Response Matrix

Microsoft's move forces every enterprise AI platform to respond. The table below maps the competitive landscape and the strategic options available to each major player.

| Platform | Position Relative to Microsoft | Vulnerability | Likely Response |
|---|---|---|---|
| OpenAI ChatGPT | GPT used but not as sole model | Revenue dependency on Microsoft | Push direct enterprise sales, super app consolidation |
| Anthropic Claude | Quality arbiter role in Copilot | Revenue dependency on Microsoft/Google | Independent enterprise go-to-market, direct Copilot replacement |
| Google Gemini | Google Workspace lacks agent orchestration | Late to enterprise AI agents | Multi-model integration, Gemini agent framework |
| Perplexity | Outperformed on DRACO by Microsoft | Narrow user base, no enterprise ecosystem | Deep research API, B2B analytics services |
| Cursor | Independent developer tool | No enterprise suite integration | Multi-model IDE, broader language support |
| Salesforce Slackbot | 30 new AI features, MCP integration | Limited to CRM ecosystem | Expand agent platform beyond CRM context |

Microsoft's positioning is now structurally superior to competitors who must choose between building multi-model capability from scratch or accepting single-model limitations. The company has already absorbed the engineering complexity of cross-model orchestration—a capability that requires significant investment in model API integration, response normalization, quality scoring, and latency management.
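Two of the integration costs named above, response normalization and latency management, can be sketched in a few lines. The example assumes simplified payloads shaped like OpenAI Chat Completions responses (`choices[0].message.content`) and Anthropic Messages responses (a `content` list of typed blocks); `normalize()`, `call_with_budget()`, and `NormalizedResponse` are hypothetical names, and the caller supplies the actual network call.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class NormalizedResponse:
    provider: str
    text: str
    latency_s: float


def normalize(provider: str, raw: dict[str, Any]) -> str:
    """Reduce provider-specific payloads (simplified) to plain text."""
    if provider == "openai":     # Chat Completions style: choices[0].message.content
        return raw["choices"][0]["message"]["content"]
    if provider == "anthropic":  # Messages style: a list of typed content blocks
        return "".join(b["text"] for b in raw["content"] if b.get("type") == "text")
    raise ValueError(f"unknown provider {provider!r}")


def call_with_budget(provider: str, call: Callable[[], dict[str, Any]],
                     budget_s: float) -> Optional[NormalizedResponse]:
    """Run `call` under a latency budget; None means the budget was exceeded."""
    start = time.perf_counter()
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(call)
    try:
        raw = future.result(timeout=budget_s)
    except FutureTimeout:
        return None  # the orchestrator can fall back to another model
    finally:
        pool.shutdown(wait=False)  # do not block on a slow call
    return NormalizedResponse(provider, normalize(provider, raw),
                              time.perf_counter() - start)


if __name__ == "__main__":
    def fast() -> dict[str, Any]:
        return {"content": [{"type": "text", "text": "Claim verified."}]}

    def slow() -> dict[str, Any]:
        time.sleep(0.5)
        return {"choices": []}

    print(call_with_budget("anthropic", fast, budget_s=1.0))
    print(call_with_budget("openai", slow, budget_s=0.1))  # None
```

Note that a timed-out call keeps running in its worker thread; cancelling the underlying request is left to the vendor client.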

Financial Implications for Enterprise AI Buyers

The multi-model approach has direct cost implications. Running GPT for generation and Claude for validation roughly doubles the per-query compute cost compared to single-model deployment. However, organizations should evaluate total cost per accurate decision rather than per-query cost.

Consider a legal research workflow of two hundred queries per month. At a single-model accuracy of eighty percent, forty queries produce outputs containing factual errors that require manual verification. At a Critique accuracy of ninety-three percent, only fourteen queries contain errors. If verifying and correcting each error takes about fifteen minutes at standard knowledge worker rates, the twenty-six avoided errors save six and a half hours of labor per month, which often exceeds the additional compute cost of running two models. Because both the labor savings and the extra compute scale with query volume, the comparison is best made per query: for high-stakes workflows in legal, financial, and medical contexts, the accuracy premium is economically justified whenever the verification labor avoided per query exceeds the added compute cost per query.
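The arithmetic in this example can be reproduced with a short calculator. Only the query volume, the two accuracy levels, and the fifteen-minute verification time come from the example; the $80 hourly rate and $0.50 of extra compute per query are placeholder assumptions, and `monthly_tradeoff()` and `break_even_compute_per_query()` are illustrative names.

```python
def monthly_tradeoff(queries: int, acc_single: float, acc_multi: float,
                     minutes_per_error: float, hourly_rate: float,
                     extra_compute_per_query: float) -> dict[str, float]:
    """Compare verification labor saved against the extra compute of a second model."""
    errors_single = round(queries * (1 - acc_single))
    errors_multi = round(queries * (1 - acc_multi))
    hours_saved = (errors_single - errors_multi) * minutes_per_error / 60
    labor_saved = hours_saved * hourly_rate
    extra_compute = queries * extra_compute_per_query
    return {
        "errors_single": errors_single,
        "errors_multi": errors_multi,
        "hours_saved": hours_saved,
        "net_savings": labor_saved - extra_compute,
    }


def break_even_compute_per_query(acc_single: float, acc_multi: float,
                                 minutes_per_error: float, hourly_rate: float) -> float:
    """Largest extra compute cost per query that the accuracy gain still pays for.
    Query volume cancels out, so the threshold is the same at any volume."""
    return (acc_multi - acc_single) * minutes_per_error / 60 * hourly_rate


if __name__ == "__main__":
    # Volume, accuracies, and minutes per error follow the example above;
    # the hourly rate and extra compute cost are placeholders.
    print(monthly_tradeoff(200, 0.80, 0.93, 15, hourly_rate=80.0,
                           extra_compute_per_query=0.50))
    print(round(break_even_compute_per_query(0.80, 0.93, 15, 80.0), 2))
```

Under these placeholder rates the workflow avoids 26 errors, saves 6.5 hours, and nets $420 per month; the dual-model setup stays cheaper as long as its extra compute is below about $2.60 per query.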

Strategic Actions for Q2 2026

Enterprise leaders should act on three fronts based on this development.

First, reassess single-vendor AI strategies. Microsoft has demonstrated that the technical risk of depending on one model provider outweighs the simplicity of single-vendor procurement. Organizations using a single AI vendor face quality ceilings that multi-model orchestration has already surpassed.

Second, evaluate agentic AI readiness. Copilot Cowork's rapid adoption indicates that enterprise demand for autonomous workflow execution has reached commercial viability. Companies still testing chat-based AI should accelerate planning around agent delegation models.

Third, audit existing AI accuracy requirements. The Critique architecture provides a benchmark: if your current AI tool operates below ninety-three percent accuracy on complex research tasks, a multi-model validation approach is likely to improve outcomes at a manageable incremental cost.
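For the accuracy audit, a single score from a small evaluation set can mislead. One option, swapped in here as an assumption rather than anything the article prescribes, is to grade a sample of research tasks and compare a Wilson score confidence interval against the ninety-three percent benchmark, calling a tool "below threshold" only when the whole interval sits under it. The `wilson_interval()` and `audit()` names are illustrative.

```python
import math


def wilson_interval(correct: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if n <= 0 or not 0 <= correct <= n:
        raise ValueError("need n > 0 and 0 <= correct <= n")
    p = correct / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


def audit(correct: int, n: int, threshold: float = 0.93) -> str:
    """Classify a graded sample of tasks against an accuracy threshold."""
    lo, hi = wilson_interval(correct, n)
    if hi < threshold:
        return "below threshold"
    if lo >= threshold:
        return "meets threshold"
    return "inconclusive: grade more tasks"


if __name__ == "__main__":
    for correct in (85, 92, 99):
        print(correct, "of 100:", audit(correct, 100))
```

With 100 graded tasks, 85 correct is clearly below the benchmark, 92 correct is inconclusive because the interval straddles ninety-three percent, and 99 correct clears it.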

Microsoft's multi-model strategy is not about achieving incremental quality improvement. It is about fundamentally restructuring the enterprise AI market around orchestration capability rather than individual model performance. The company that controls the adjudication layer between competing models controls the enterprise AI stack—and Microsoft has just made the strongest claim to that position.
